pig简介

本文共 10278 字,大约阅读时间需要 34 分钟。

转自:http://www.aboutyun.com/thread-6713-1-1.html

pig简介

pig是hadoop上层的衍生架构,与hive类似。对比hive(hive类似sql,是一种声明式的语言),pig是一种过程语言,类似于存储过程一步一步得进行数据转化。pig简单操作

1.从文件导入数据

1)Mysql (Mysql需要先创建表).

CREATE TABLE TMP_TABLE(USER VARCHAR(32),AGE INT,IS_MALE BOOLEAN);

CREATE TABLE TMP_TABLE_2(AGE INT,OPTIONS VARCHAR(50)); -- 用于Join

LOAD DATA LOCAL INFILE '/tmp/data_file_1' INTO TABLE TMP_TABLE ;

LOAD DATA LOCAL INFILE '/tmp/data_file_2' INTO TABLE TMP_TABLE_2;

2)Pig

tmp_table = LOAD '/tmp/data_file_1' USING PigStorage('\t') AS (user:chararray, age:int,is_male:int);

tmp_table_2= LOAD '/tmp/data_file_2' USING PigStorage('\t') AS (age:int,options:chararray);

2.查询整张表

1)Mysql

SELECT * FROM TMP_TABLE;

2)Pig

DUMP tmp_table;

3. 查询前50行

1)Mysql

SELECT * FROM TMP_TABLE LIMIT 50;

2)Pig

tmp_table_limit = LIMIT tmp_table 50;

DUMP tmp_table_limit;

4.查询某些列

1)Mysql

SELECT USER FROM TMP_TABLE;

2)Pig

tmp_table_user = FOREACH tmp_table GENERATE user;

DUMP tmp_table_user;

5. 给列取别名

1)Mysql

SELECT USER AS USER_NAME,AGE AS USER_AGE FROM TMP_TABLE;

2)Pig

tmp_table_column_alias = FOREACH tmp_table GENERATE user AS user_name,age AS user_age;

DUMP tmp_table_column_alias;

6.排序

1)Mysql

SELECT * FROM TMP_TABLE ORDER BY AGE;

2)Pig

tmp_table_order = ORDER tmp_table BY age ASC;

DUMP tmp_table_order;

7.条件查询

1)Mysql

SELECT * FROM TMP_TABLE WHERE AGE>20;

2) Pig

tmp_table_where = FILTER tmp_table by age > 20;

DUMP tmp_table_where;

8.内连接Inner Join

1)Mysql

SELECT * FROM TMP_TABLE A JOIN TMP_TABLE_2 B ON A.AGE=B.AGE;

2)Pig

tmp_table_inner_join = JOIN tmp_table BY age,tmp_table_2 BY age;

DUMP tmp_table_inner_join;

9.左连接Left Join

1)Mysql

SELECT * FROM TMP_TABLE A LEFT JOIN TMP_TABLE_2 B ON A.AGE=B.AGE;

2)Pig

tmp_table_left_join = JOIN tmp_table BY age LEFT OUTER,tmp_table_2 BY age;

DUMP tmp_table_left_join;

10.右连接Right Join

1)Mysql

SELECT * FROM TMP_TABLE A RIGHT JOIN TMP_TABLE_2 B ON A.AGE=B.AGE;

2)Pig

tmp_table_right_join = JOIN tmp_table BY age RIGHT OUTER,tmp_table_2 BY age;

DUMP tmp_table_right_join;

11.全连接Full Join

1)Mysql

SELECT * FROM TMP_TABLE A JOIN TMP_TABLE_2 B ON A.AGE=B.AGE

UNION SELECT * FROM TMP_TABLE A LEFT JOIN TMP_TABLE_2 B ON A.AGE=B.AGE

UNION SELECT * FROM TMP_TABLE A RIGHT JOIN TMP_TABLE_2 B ON A.AGE=B.AGE;

2)Pig

tmp_table_full_join = JOIN tmp_table BY age FULL OUTER,tmp_table_2 BY age;

DUMP tmp_table_full_join;

12.同时对多张表交叉查询

1)Mysql

SELECT * FROM TMP_TABLE,TMP_TABLE_2;

2)Pig

tmp_table_cross = CROSS tmp_table,tmp_table_2;

DUMP tmp_table_cross;

13.分组GROUP BY

1)Mysql

SELECT * FROM TMP_TABLE GROUP BY IS_MALE;

2)Pig

tmp_table_group = GROUP tmp_table BY is_male;

DUMP tmp_table_group;

14.分组并统计

1)Mysql

SELECT IS_MALE,COUNT(*) FROM TMP_TABLE GROUP BY IS_MALE;

2)Pig

tmp_table_group_count = GROUP tmp_table BY is_male;

tmp_table_group_count = FOREACH tmp_table_group_count GENERATE group,COUNT($1);

DUMP tmp_table_group_count;

15.查询去重DISTINCT

1)MYSQL

SELECT DISTINCT IS_MALE FROM TMP_TABLE;

2)Pig

tmp_table_distinct = FOREACH tmp_table GENERATE is_male;

tmp_table_distinct = DISTINCT tmp_table_distinct;

DUMP tmp_table_distinct;

---------------------------------------------------------------------------------------------------------------------------------------------------

上面简单操作,下面需要进一步了解pig支持的内容:

pig支持数据类型

double > float > long > int > bytearray

tuple|bag|map|chararray > bytearray

double float long int chararray bytearray都相当于pig的基本类型

tuple相当于数组 ,但是可以类型不一,举例('dirkzhang','dallas',41)

Bag相当于tuple的一个集合,举例{('dirk',41),('kedde',2),('terre',31)},在group的时候会生成bag

Map相当于哈希表,key为chararray,value为任意类型,例如['name'#dirk,'age'#36,'num'#41

nulls 表示的不只是数据不存在,他更表示数据是unkown

pig latin语法

1:load

LOAD 'data' [USING function] [AS schema];

例如:

load = LOAD 'sql://{SELECT MONTH_ID,DAY_ID,PROV_ID FROM zb_d_bidwmb05009_010}' USING com.xxxx.dataplatform.bbdp.geniuspig.VerticaLoader('oracle','192.168.6.5','dev','1522','vbap','vbap','1') AS (MONTH_ID:chararray,DAY_ID:chararray,PROV_ID:chararray);

Table = load ‘url’ as (id,name…..); //table和load之间除了等号外 还必须有个空格 不然会出错,url一定要带引号,且只能是单引号。

2:filter

alias = FILTER alias BY expression;

Table = filter Table1 by + A; //A可以是 id > 10;not name matches ‘’,is not null 等,可以用and 和or连接各条件

例如:

filter = filter load20 by ( MONTH_ID == '1210' and DAY_ID == '18' and PROV_ID == '010' );

3:group

alias = GROUP alias { ALL | BY expression} [, alias ALL | BY expression …] [USING 'collected' | 'merge'] [PARTITION BY partitioner] [PARALLEL n];

pig的分组,不仅是数据上的分组,在数据的schema形式上也进行分组为groupcolumn:bag

Table3 = group Table2 by id;也可以Table3 = group Table2 by (id,name);括号必须加

可以使用ALL实现对所有字段的分组

4:foreach

alias = FOREACH alias GENERATE expression [AS schema] [expression [AS schema]….];

alias = FOREACH nested_alias {

alias = {nested_op | nested_exp}; [{alias = {nested_op | nested_exp}; …]

GENERATE expression [AS schema] [expression [AS schema]….]

};

一般跟generate一块使用

Table = foreach Table generate (id,name);括号可加可不加。

avg = foreach Table generate group, AVG(age); MAX ,MIN..

在进行数据过滤时,建议尽早使用foreach generate将多余的数据过滤掉,减少数据交换

5:join

Inner join Syntax

alias = JOIN alias BY {expression|'('expression [, expression …]')'} (, alias BY {expression|'('expression [, expression …]')'} …) [USING 'replicated' | 'skewed' | 'merge' | 'merge-sparse'] [PARTITION BY partitioner] [PARALLEL n];

Outer join Syntax

alias = JOIN left-alias BY left-alias-column [LEFT|RIGHT|FULL] [OUTER], right-alias BY right-alias-column [USING 'replicated' | 'skewed' | 'merge'] [PARTITION BY partitioner] [PARALLEL n];

join/left join / right join

daily = load 'A' as (id,name, sex);

divs = load 'B' as (id,name, sex);

join

jnd = join daily by (id, name), divs by (id, name);

left join

jnd = join daily by (id, name) left outer, divs by (id, name);

也可以同时多个变量,但只用于inner join

A = load 'input1' as (x, y);

B = load 'input2' as (u, v);

C = load 'input3' as (e, f);

alpha = join A by x, B by u, C by e;

6: union

alias = UNION [ONSCHEMA] alias, alias [, alias …];

union 相当与sql中的union,但与sql不通的是pig中的union可以针对两个不同模式的变量:如果两个变量模式相同,那么union后的变量模式与 变量的模式一样;如果一个变量的模式可以由另一各变量的模式强制类型转换,那么union后的变量模式与转换后的变量模式相同;否则,union后的变量 没有模式。

A = load 'input1' as (x:int, y:float);

B = load 'input2' as (x:int, y:float);

C = union A, B;

describe C;

C: {x: int,y: float}

A = load 'input1' as (x:double, y:float);

B = load 'input2' as (x:int, y:double);

C = union A, B;

describe C;

C: {x: double,y: double}

A = load 'input1' as (x:int, y:float);

B = load 'input2' as (x:int, y:chararray);

C = union A, B;

describe C;

Schema for C unknown.

注意:在pig 1.0中 执行不了最后一种union。

如果需要对两个具有不通列名的变量union的话,可以使用onschema关键字

A = load 'input1' as (w: chararray, x:int, y:float);

B = load 'input2' as (x:int, y:double, z:chararray);

C = union onschema A, B;

describe C;

C: {w: chararray,x: int,y: double,z: chararray}

join和union之后alias的别名会变

7:Dump

dump alias

用于在屏幕上显示数据。

8:Order by

alias = ORDER alias BY { * [ASC|DESC] | field_alias [ASC|DESC] [, field_alias [ASC|DESC] …] } [PARALLEL n];

A = order Table by id desc;

9:distinct

A = distinct alias;

10:limit

A = limit alias 10;

11:sample

SAMPLE alias size;

随机抽取指定比例(0到1)的数据。

some = sample divs 0.1;

13:cross

alias = CROSS alias, alias [, alias …] [PARTITION BY partitioner] [PARALLEL n];

将多个数据集中的数据按照字段名进行同值组合,形成笛卡尔积。

--cross.pig

daily = load 'NYSE_daily' as (exchange:chararray, symbol:chararray,date:chararray, open:float, high:float, low:float,

close:float, volume:int, adj_close:float);

divs = load 'NYSE_dividends' as (exchange:chararray, symbol:chararray,date:chararray, dividends:float);

tonsodata = cross daily, divs parallel 10;

15:split

Syntax

SPLIT alias INTO alias IF expression, alias IF expression [, alias IF expression …] [, alias OTHERWISE];

A = LOAD 'data' AS (f1:int,f2:int,f3:int);

DUMP A;

(1,2,3)

(4,5,6)

(7,8,9)

SPLIT A INTO X IF f1<7, Y IF f2==5, Z IF (f3<6 OR f3>6);

DUMP X;

(1,2,3)

(4,5,6)

DUMP Y;

(4,5,6)

DUMP Z;

(1,2,3)

(7,8,9)

16:store

Store … into … Using…

pig在别名维护上:

1、join

如e = join d by name,b by name;

g = foreach e generate $0 as one:chararray, $1 as two:int, $2 as three:chararray,$3 asfour:int;

他生成的schemal:

e: {d::name: chararray,d::position: int,b::name: chararray,b::age: int}

g: {one: chararray,two: int,three: chararray,four: int}

2、group

B = GROUP A BY age;

----------------------------------------------------------------------

| B | group: int | A: bag({name: chararray,age: int,gpa: float}) |

----------------------------------------------------------------------

| | 18 | {(John, 18, 4.0), (Joe, 18, 3.8)} |

| | 20 | {(Bill, 20, 3.9)} |

----------------------------------------------------------------------

(18,{(John,18,4.0F),(Joe,18,3.8F)})

pig udf自定义

pig支持嵌入user defined function,一个简单的udf 继承于evalFunc,通常用在filter,foreach中

----------------------------------------------------------------------------------------------------------------------------------------------------

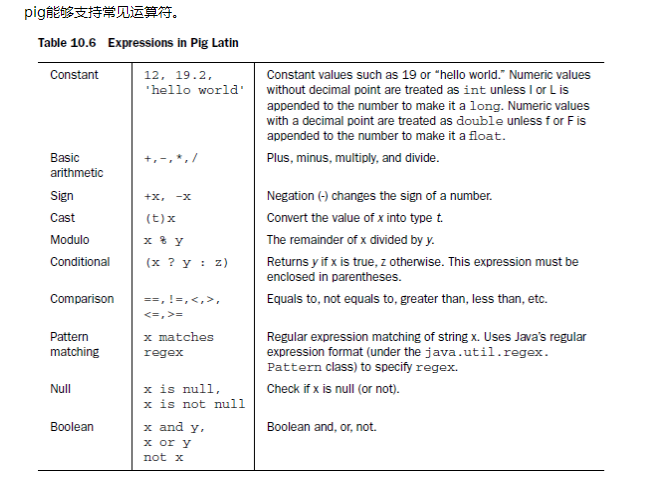

如果我们知道nosql和传统数据库是有差别的,那么他们的都支持什么运算符和函数

4.6 Retional operators

首先编写两个数据文件A:

0,1,2

1,3,4

数据文件B:

0,5,2

1,7,8

运行pig:

xuqiang@ubuntu:~/hadoop/src/pig/pig-0.8.1/tutorial/pigtmp$ pig -x local

2011-06-05 18:46:54,039 [main] INFO org.apache.pig.Main - Logging error messages to: /home/xuqiang/hadoop/src/pig/pig-0.8.1/tutorial/pigtmp/pig_1307324814030.log

2011-06-05 18:46:54,324 [main] INFO org.apache.pig.backend.hadoop.executionengine.HExecutionEngine - Connecting to hadoop file system at: file:///

grunt>

加载数据A:

grunt> a = load 'A' using PigStorage(',') as (a1:int, a2:int, a3:int);

加载数据B:

grunt> b = load 'B' using PigStorage(',') as (b1:int, b2:int, b3:int);

求a,b的并集:

grunt> c = union a, b;

grunt> dump c;

(0,5,2)

(1,7,8)

(0,1,2)

(1,3,4)

将c分割为d和e,其中d的第一列数据值为0,e的第一列的数据为1($0表示数据集的第一列):

grunt> split c into d if $0 == 0, e if $0 == 1;

查看d:

grunt> dump d;

(0,1,2)

(0,5,2)

查看e:

(1,3,4)

(1,7,8)

选择c中的一部分数据:

grunt> f = filter c by $1 > 3;

查看数据f:

grunt> dump f;

(0,5,2)

(1,7,8)

对数据进行分组:

grunt> g = group c by $2;

查看g:

grunt> dump g;

(2,{(0,1,2),(0,5,2)})

(4,{(1,3,4)})

(8,{(1,7,8)})

当然也能够将所有的元素集合到一起:

grunt> h = group c all;

grunt> dump h;

(all,{(0,1,2),(1,3,4),(0,5,2),(1,7,8)})

查看h中元素个数:

grunt> i = foreach h generate COUNT($1);

查看元素个数:

grunt> dump i;

这里可能出现Could not resolve counter using imported: [, org.apache.pig.built in., org.apache.pig.impl.builtin. ]的情况,这是需要使用register命令来注册pig对应的jar版本。

接下俩试一下jon操作:

grunt> j = join a by $2, b by $2;

该操作类似于sql中的连表查询,这是的条件是$2 == $2。

取出c的第二列$1和$1 * $2,将这两列保存在k中:

grunt> k = foreach c generate $1, $1 * $2;

查看k的内容:

grunt> dump k;

(5,10)

(7,56)

(1,2)

(3,12)

转载地址:http://ccyxi.baihongyu.com/

你可能感兴趣的文章

实验2-6 字符型数据的输入输出

查看>>

实验3-5 编程初步

查看>>

实验4-1 逻辑量的编码和关系操作符

查看>>

实验5-2 for循环结构

查看>>

实验5-3 break语句和continue语句

查看>>

实验5-4 循环的嵌套

查看>>

实验5-5 循环的合并

查看>>

实验5-6 do-while循环结构

查看>>

实验5-7 程序调试入门

查看>>

实验5-8 综合练习

查看>>

第2章实验补充C语言中如何计算补码

查看>>

深入入门正则表达式(java) - 命名捕获

查看>>

使用bash解析xml

查看>>

android系统提供的常用命令行工具

查看>>

【Python基础1】变量和字符串定义

查看>>

【Python基础2】python字符串方法及格式设置

查看>>

【Python】random生成随机数

查看>>

【Python基础3】数字类型与常用运算

查看>>

【Python基础4】for循环、while循环与if分支

查看>>

【Python基础6】格式化字符串

查看>>